Virtual Documents: Conversational Interfaces that Generate Natural Language Explanations

Around the time the Web first came online in 1993, Tom was doing research at Stanford to build AI systems that could understand how things work, in particular electromechanical systems designed by engineers. The goal wasn’t to replace engineers, but to give them tools that would help capture their design intent and assumptions about how the device should operate, so that the machine could help other people understand the design at scale.

Space exploration, with its attendant complexities of scale, gave the researchers a great test case. NASA would commission very large systems like the Hubble Space Telescope, which was designed and built by thousands of people over a decade, most of whom would move on to other jobs, and which would be operated by many more people for another decade. The documentation created during the construction of the telescope could never anticipate all the situations that might arise over its lifetime.

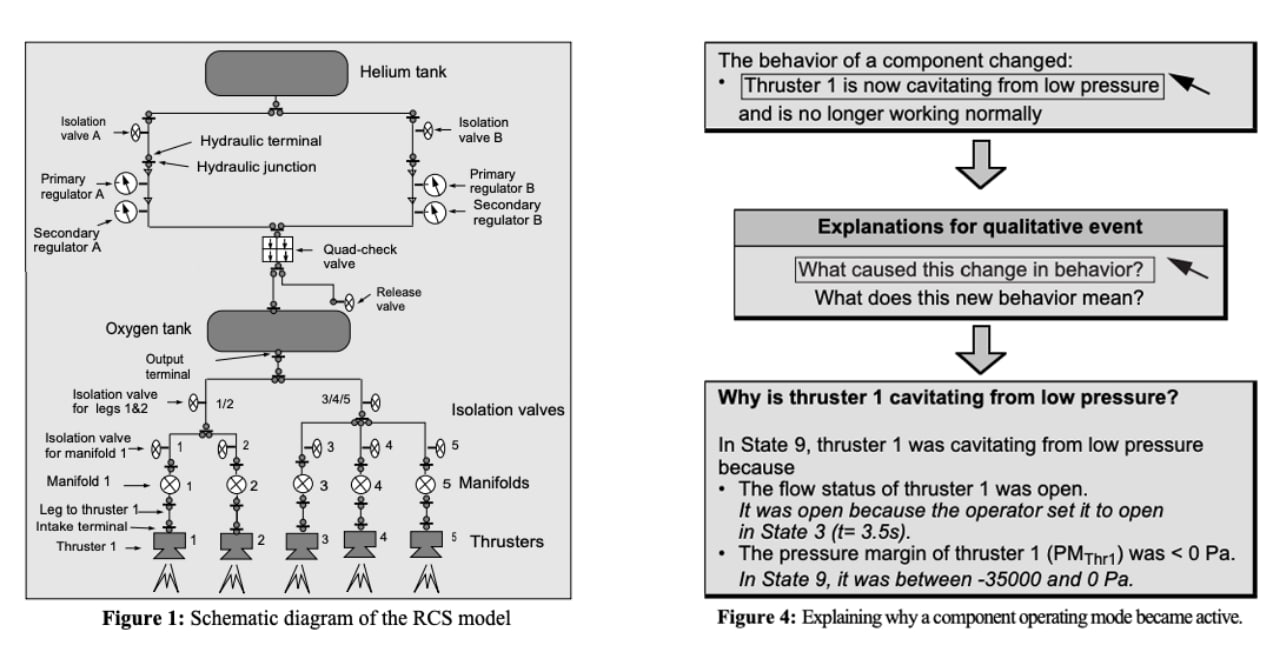

For this problem, Tom partnered with colleagues who were experts in engineering modeling. The experts could build systems that knew how to simulate the operations of spacecraft such as the Hubble telescope. Tom’s job was to bring the simulation to life with an interface that would allow humans to learn about how it works and how to operate it in a myriad of possible scenarios. The goal was to make something as easy to understand as human-written documentation, but driven by real engineering models (e.g., partial differential equations) and databases of how all of the component parts were combined in the spacecraft.

The solution was to use simulations of the engineered object’s behavior to drive explanations, in natural human language, of how it works. These explanations were paragraphs of text in which every noun, phrase, and sentence was a hyperlink. If the user clicked on a hyperlink, the program would generate a set of follow-up questions in natural language about the thing or phenomenon being described. For example, clicking on a noun describing a valve on the spacecraft would lead to questions such as “what is this part?” and “what happens if I open this valve?” Clicking on one of these questions would lead to a new explanation, which would also be full of links to follow-up questions.
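The click-question-explanation loop described above can be sketched in a few lines of Python. This is only an illustrative toy, not the original system: the `Component` class, the `MODEL` dictionary, the component names, and the canned answer strings are all hypothetical stand-ins for what the real system derived from engineering simulations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    """A part of the device (hypothetical, hand-written stand-in for a real model)."""
    name: str
    kind: str
    description: str
    effect_if_opened: str = ""


# Toy device model. The original system used real simulations, not canned text.
MODEL = {
    "fuel valve": Component(
        name="fuel valve",
        kind="valve",
        description="The fuel valve controls flow from the fuel tank to the thruster.",
        effect_if_opened="Opening the fuel valve lets fuel flow from the fuel tank to the thruster.",
    ),
    "fuel tank": Component(
        name="fuel tank",
        kind="tank",
        description="The fuel tank stores propellant for the thruster.",
    ),
    "thruster": Component(
        name="thruster",
        kind="thruster",
        description="The thruster burns fuel to change the spacecraft's velocity.",
    ),
}


def follow_up_questions(term):
    """Generate the questions offered when the user clicks on a term."""
    component = MODEL[term]
    questions = [f"What is the {component.name}?"]
    if component.kind == "valve":
        questions.append(f"What happens if I open the {component.name}?")
    return questions


def answer(term, question):
    """Produce the explanation for a question the user selected."""
    component = MODEL[term]
    if question.startswith("What happens if I open"):
        return component.effect_if_opened
    return component.description


def linked_terms(text):
    """Find the modeled terms in an explanation; each one would become a new link."""
    lowered = text.lower()
    return [name for name in MODEL if name in lowered]


questions = follow_up_questions("fuel valve")
explanation = answer("fuel valve", questions[1])
print(questions)
print(explanation)
print(linked_terms(explanation))
```

Every explanation mentions other modeled parts, so each answer yields new clickable terms and the loop can continue for as long as the user keeps exploring.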

This interface and interaction paradigm was inspired by a combination of AI methods for generating qualitative explanations of how things work and hypertext interaction techniques. It offered the familiarity of natural language without requiring that users know how to ask questions that the system could answer.

Virtual Documents

The UI was built on top of a hypertext framework that was familiar to users of the expensive AI workstations of the day. In fact, the very earliest Web browsers were built on top of this class of workstation, packaging the ideas of the expensive hypertext APIs into a publishing paradigm (HTML) and building universal free clients that rendered the UI.

Ironically, as the researchers were showing their AI-based Intelligent UI work to potential funders, they were also introducing these funders to the Web. The funders were astonished by the possibilities. The research team quickly realized that they should try to implement this experience to run in Web browsers. So they (mostly James Rice and Patrice Gautier) wrote an HTTP server from scratch and an HTML interface to the system so that it could be put on the Web.

The result was revealing. The workstation version of this interface may have been seen by 20-30 people. Within the first few months of publication online — with absolutely no marketing or outreach — over 30,000 users had tried it out. That was a thousand-fold increase in reach, overnight.

The writing was on the wall. From then on, interesting interactive user interfaces would live on the Web and be available to anyone.

Tom coined the term virtual documents to refer to this new class of interface, which generates both sides of a conversation about its domain (the questions and the answers) and presents them in a dynamic hypertext interface. The user of a virtual document “asks” these questions not by typing text, but by selecting from sets of follow-up questions that the system proposes. The system answers the chosen questions in natural language and hyperlinked diagrams. In this way the document unfolds as it is consumed, allowing the user to explore any aspect of the domain that the system models. In effect, a virtual document is a conversational natural-language interface without speech or natural language understanding (NLU). It would be another 16 years before speech and NLU were brought to the mainstream.
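To make the "dynamic hypertext" part concrete, here is a minimal sketch of how a generated explanation and its follow-up questions might be turned into HTML. It is an assumption-laden illustration rather than the original implementation: the function names and the `/questions` and `/answer` URL paths are hypothetical, and only Python standard-library calls are used.

```python
import html
import re
from urllib.parse import quote


def render_explanation(text, terms):
    """Wrap each known term in the explanation with a link to its follow-up questions."""
    if not terms:
        return "<p>" + html.escape(text) + "</p>"
    # Try longer terms first so a long name wins over a shorter one it contains.
    ordered = sorted(terms, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(t) for t in ordered), re.IGNORECASE)
    parts = []
    last = 0
    for match in pattern.finditer(text):
        # Plain text between links is escaped so it cannot inject markup.
        parts.append(html.escape(text[last:match.start()]))
        term = match.group(0)
        href = "/questions?term=" + quote(term.lower())  # hypothetical URL scheme
        parts.append(f'<a href="{href}">{html.escape(term)}</a>')
        last = match.end()
    parts.append(html.escape(text[last:]))
    return "<p>" + "".join(parts) + "</p>"


def render_questions(questions):
    """Render the system-proposed follow-up questions as a list of links."""
    items = "".join(
        f'<li><a href="/answer?q={quote(q)}">{html.escape(q)}</a></li>'  # hypothetical URL scheme
        for q in questions
    )
    return "<ul>" + items + "</ul>"


page = render_explanation(
    "Opening the fuel valve feeds the thruster.",
    ["fuel valve", "thruster"],
)
print(page)
print(render_questions(["What is the fuel valve?"]))
```

Because the user only ever follows links that the system itself produced, every request maps to a question the system knows how to answer, which is why no natural language understanding is needed on the input side.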

Tom became an early advocate for the Web and gave talks at Stanford and elsewhere describing the virtual-document interaction as a new medium to share knowledge. The idea that the Web was a new UI paradigm wasn’t lost on the early pioneers, many of whom were starting companies like Netscape and Amazon. Tom saw that the old days of having impact through demos and academic publishing were about to change forever. It was then that he left Stanford to join e-commerce pioneer Marty Tenenbaum at EIT (later CommerceNet), a leader in establishing the standards that made the Web safe for commerce.

See Also

Thomas Gruber, Sunil Vemuri, and James Rice (1995). Model-Based Virtual Document Generation. International Journal of Human-Computer Studies, Volume 46, Issue 6 (June 1997). Special issue: Innovative Applications of the World Wide Web. ISSN: 1071-5819. Describes the use of the Web as a medium for virtual documents that generate natural language explanations of how things work.

Thomas R. Gruber and Patrice O. Gautier. (1993). Machine-generated Explanations of Engineering Models: A Compositional Modeling Approach. Proceedings of the 13th International Joint Conference on Artificial Intelligence, Chambery, France, pages 1502-1508, San Mateo, CA: Morgan Kaufmann, 1993.

Patrice O. Gautier and Thomas R. Gruber (1993). Generating Explanations of Device Behavior Using Compositional Modeling and Causal Ordering. Proceedings of the Eleventh National Conference on Artificial Intelligence, Washington, D.C., AAAI Press/The MIT Press, 1993.

Thomas R. Gruber (1990). The Use of Formally-Represented Engineering Knowledge to Support Human Communication and Memory. Presented at the 1990 AAAI Spring Symposium on Knowledge-based Human-Computer Communication, Stanford University, March 27-29, 1990.

Thomas R. Gruber and Daniel M Russell. (1992). Generative Design Rationale: Beyond the Record and Replay Paradigm. In T. Moran and J. H. Carroll (Eds.), Design Rationale: Concepts, Techniques, and Use. Lawrence Erlbaum Associates, 1995, pp. 323 – 349. ISBN:0-8058-1567-8. Originally written in 1992, on the web in 1993, in print in 1995!